
Direct mapped vs set associative

7/31/2023

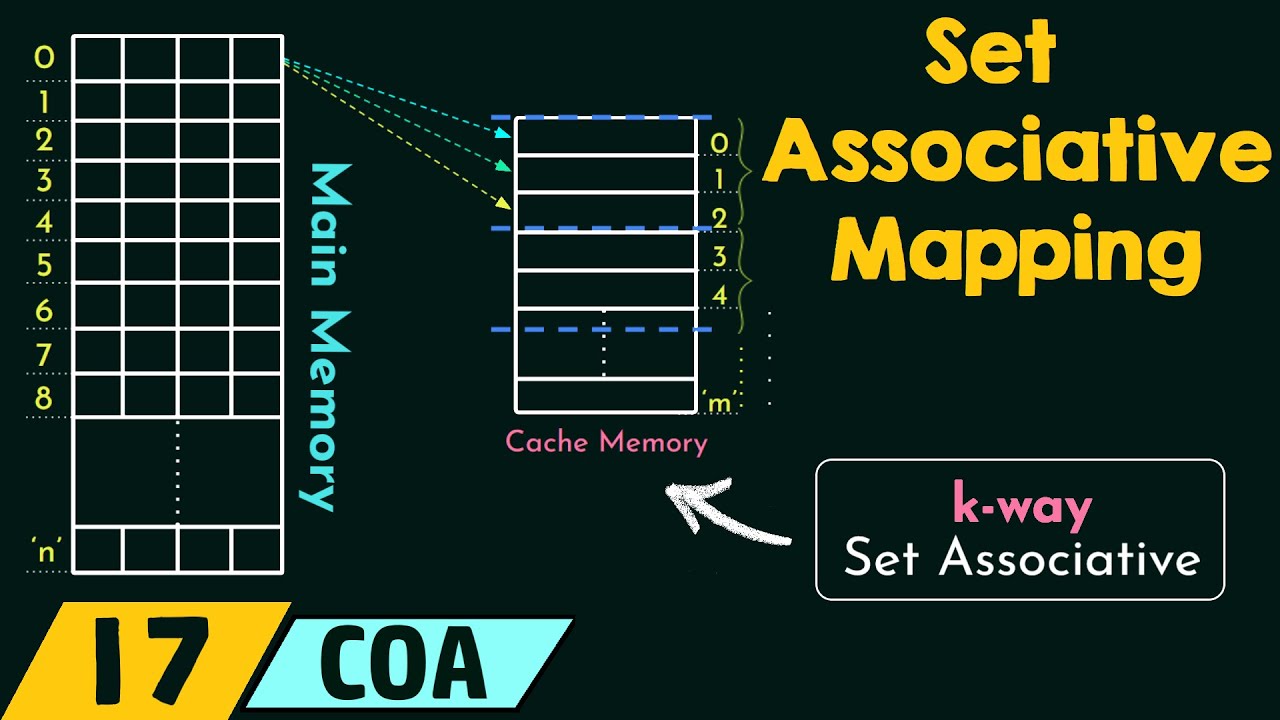

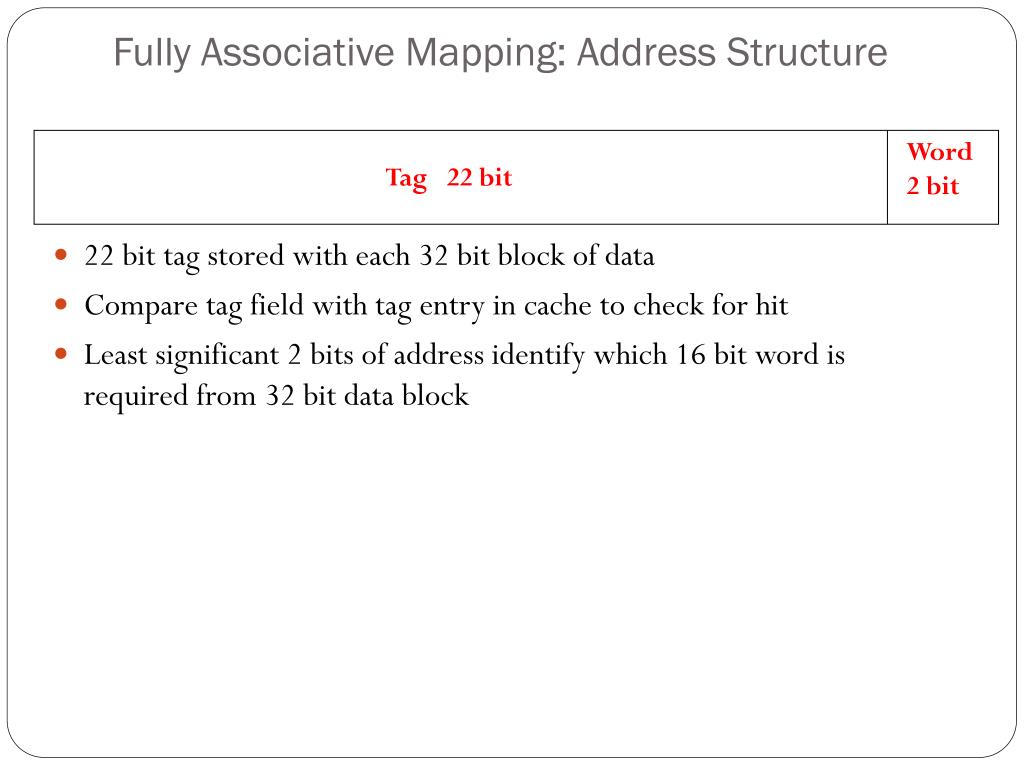

Review

(Figure: a direct-mapped cache with 1024 entries, indexed 0 to 1023, each holding a valid bit, a tag and data. The 32-bit address splits into a 20-bit tag, a 10-bit index and a 2-bit block offset. Comparing the stored tag with the address tag produces the Hit signal, and a mux driven by the 2 offset bits selects 8 bits of the data.)

How is this cache different if…

- the block is 4 words?
- the index field is 12 bits?

2-way set associative implementation

(Figure: an m-bit address splits into an (m-k-n)-bit tag, a k-bit index and an n-bit block offset. Each of the 2^k sets holds two blocks of 2^n bytes, each with its own valid bit and tag. Two comparators check the tags, and a 2-to-1 mux selects the data on a hit.)

How does a 2-way set associative cache compare with a fully associative cache? Only 2 comparators are needed, and the cache tags are a little shorter too. The open question is how to decide which block to replace.

Set associative caches are a general idea

By now you have noticed that a 1-way set associative cache is the same as a direct-mapped cache. Similarly, if a cache has 2^k blocks, a 2^k-way set associative cache would be the same as a fully associative cache. For example, a cache with 8 blocks (0 to 7) can be organized as:

- 1-way: 8 sets, 1 block each (direct mapped)
- 2-way: 4 sets, 2 blocks each
- 4-way: 2 sets, 4 blocks each
- 8-way: 1 set, 8 blocks (fully associative)

Summary

- Larger block sizes can take advantage of spatial locality by loading data from not just one address, but also nearby addresses, into the cache.
- Associative caches assign each memory address to a particular set within the cache, but not to any specific block within that set.
- Set sizes range from 1 (direct mapped) to 2^k (fully associative).
- Larger sets and higher associativity lead to fewer cache conflicts and lower miss rates, but they also increase the hardware cost.
- In practice, 2-way through 16-way set associative caches strike a good balance between lower miss rates and higher costs.

Next, we'll talk more about measuring cache performance, and also discuss the issue of writing data to a cache.

Write-through caches

A write-through cache solves the inconsistency problem by forcing all writes to update both the cache and the main memory. This is simple to implement and keeps the cache and memory consistent. The bad thing is that by forcing every write to go to main memory, we use up bandwidth between the cache and the memory.

Write buffers

Write-through caches can result in slow writes, so processors typically include a write buffer, which queues pending writes to main memory and permits the CPU to continue …

Buffers are commonly used when two devices run at different speeds. If a producer generates data too quickly for a consumer to handle, the extra data is stored in a buffer and the producer can continue on with other tasks, without waiting for the consumer. Conversely, if the producer slows down, the consumer can continue running at full speed as long as there is excess data in the buffer. For us, the producer is the CPU and the consumer is the main memory.

(Figure: Producer, Buffer, Consumer; for a cache, CPU, Write Buffer, Memory.)

Notice that the write buffer allows the CPU to continue before the write is complete, but write-through still has a problem: it uses memory bandwidth.

Write-back caches

In a write-back cache, the memory is not updated until the cache block needs to be replaced (e.g., when loading data into a full cache set). For example, we might write some data to the cache at first, leaving it inconsistent with the main memory. The cache block is marked "dirty" to indicate this inconsistency. Subsequent reads to the same memory address will be serviced by the cache, which contains the correct, updated data.

(Figure: a write-back cache entry with Index, Tag, Data and Dirty fields, looked up by Address.)
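The ideas above (splitting an address into tag, index and block offset, varying the associativity, and choosing between write-through and write-back) can be tried out in a small simulation. The following is only a minimal sketch, not something from the original slides: the class `SetAssociativeCache` and the helpers `conflict_misses` and `repeated_writes` are hypothetical names, replacement is assumed to be LRU, write misses are assumed to allocate a block, and the write buffer is not modelled. The sketch only counts hits, misses and writes that reach main memory.

```python
from collections import OrderedDict


class SetAssociativeCache:
    """Toy model: num_blocks blocks grouped into sets of `ways` blocks each."""

    def __init__(self, num_blocks=8, ways=2, block_size=4, write_back=True):
        assert num_blocks % ways == 0
        self.ways = ways
        self.num_sets = num_blocks // ways
        self.block_size = block_size
        self.write_back = write_back
        # One OrderedDict per set: tag -> dirty flag, least recently used first.
        self.sets = [OrderedDict() for _ in range(self.num_sets)]
        self.hits = self.misses = self.memory_writes = 0

    def split(self, address):
        """Return (tag, set index, block offset) for a byte address."""
        offset = address % self.block_size
        block_number = address // self.block_size
        return block_number // self.num_sets, block_number % self.num_sets, offset

    def access(self, address, is_write=False):
        tag, index, _ = self.split(address)
        blocks = self.sets[index]
        if tag in blocks:
            self.hits += 1
            blocks.move_to_end(tag)          # mark as most recently used
        else:
            self.misses += 1
            if len(blocks) == self.ways:     # set is full: evict the LRU block
                _, dirty = blocks.popitem(last=False)
                if dirty:
                    self.memory_writes += 1  # write-back updates memory on eviction
            blocks[tag] = False              # a newly loaded block starts clean
        if is_write:
            if self.write_back:
                blocks[tag] = True           # defer the memory update, mark dirty
            else:
                self.memory_writes += 1      # write-through updates memory now


def conflict_misses(ways):
    """Alternate between two addresses that collide in a direct-mapped cache."""
    cache = SetAssociativeCache(num_blocks=8, ways=ways, block_size=4)
    for _ in range(4):
        for address in (0, 32):
            cache.access(address)
    return cache.misses


def repeated_writes(write_back):
    """Write one address ten times, then force its block to be replaced."""
    cache = SetAssociativeCache(num_blocks=8, ways=1, block_size=4,
                                write_back=write_back)
    for _ in range(10):
        cache.access(0, is_write=True)
    cache.access(32)                         # maps to the same set as address 0
    return cache.memory_writes


if __name__ == "__main__":
    print("misses, 1-way:", conflict_misses(1))
    print("misses, 2-way:", conflict_misses(2))
    print("memory writes, write-through:", repeated_writes(False))
    print("memory writes, write-back:", repeated_writes(True))
```

In this sketch the two alternating addresses evict each other on every access in the 1-way (direct-mapped) organization, while the 2-way organization keeps both blocks in the same set. Likewise, the write-through variant sends every write to memory, while the write-back variant touches memory only when the dirty block is replaced.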

